You know the saying: always be testing. Let's run through common pitfalls of A/B testing programs in marketing, and talk about how to avoid them.

Although marketers love to talk about A/B testing, the practice emerged from the worlds of statistics and science. There is a standard process you need to follow if you want to be sure that your latest “sick win” is actually doing what you think it’s doing.

The best way to structure an A/B test in most marketing scenarios is to create a test group and a holdout group of equal size. The test group receives the new idea you’re testing and the holdout group does not. Everything else about the two groups’ experiences remains the same.

If the test group shows a statistically significant lift in the KPI you’re tracking, you can be reasonably confident that the idea you tested caused it. But that conclusion only holds if you change one thing at a time.
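
To make “statistically significant lift” a bit more concrete, here is a minimal sketch of one common way to check it for a conversion-rate KPI: a two-sided, two-proportion z-test. The function name and the visitor and conversion counts in the usage line are hypothetical. The sketch assumes visitors were randomly split between the test and holdout groups, and that both groups are large enough for the normal approximation to hold.

```python
import math


def two_proportion_z_test(conv_holdout: int, n_holdout: int,
                          conv_test: int, n_test: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_holdout = conv_holdout / n_holdout
    rate_test = conv_test / n_test
    # Pooled conversion rate under the null hypothesis that the change has no effect
    pooled = (conv_holdout + conv_test) / (n_holdout + n_test)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_holdout + 1 / n_test))
    z = (rate_test - rate_holdout) / std_err
    # Standard normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return z, p_value


# Hypothetical numbers: 3.0% vs. 3.6% conversion on 10,000 visitors per group
z, p = two_proportion_z_test(300, 10_000, 360, 10_000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

With these made-up numbers the p-value lands below the usual 0.05 threshold. That doesn’t prove the change worked. It means a difference this large would be unusual if the change had no effect at all.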

If you test a new button color and new button copy at the same time, you don’t know whether one of those elements is driving the lift or whether it’s the combination of both.

For many marketers and business owners, the fact that lift exists may matter more than what exactly drove it. But if you don’t know why your A/B test worked, you can’t learn from it or build on it, and the positive results quickly evaporate.

The challenge of the test-and-holdout approach, and the reason many DTC marketers have left it in the dust, is that you need relatively large audiences to make it work.

If your site receives only a few hundred visitors per day, it could take months for a test to reach statistical significance.
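
To see why, here is a rough sketch of the standard sample-size approximation for comparing two conversion rates, plus how many days of traffic that sample takes to collect. The 3% baseline conversion rate, 10% relative lift, and 300 daily visitors are hypothetical inputs. The sketch assumes a 50/50 split, a two-sided test at 5% significance with 80% power, and that every visitor enters the test.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, relative_lift: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors needed in EACH group to detect the given relative lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 when alpha = 0.05
    z_power = NormalDist().inv_cdf(power)           # about 0.84 when power = 0.80
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_power * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


def days_to_collect(n_per_group: int, daily_visitors: int, groups: int = 2) -> float:
    """Days of traffic needed to fill every group."""
    return n_per_group * groups / daily_visitors


n = sample_size_per_group(baseline_rate=0.03, relative_lift=0.10)
print(n, round(days_to_collect(n, daily_visitors=300)))
```

With these inputs the sketch asks for roughly 53,000 visitors per group, which is close to a year of traffic at 300 visitors per day. Doubling the lift you’re trying to detect cuts the requirement by roughly a factor of four, which is one reason smaller brands end up testing bigger swings.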

It isn’t always realistic to run a perfectly designed holdout test. And that is why the next point is so important…

If you want your A/B testing efforts to ladder up to real growth in revenue, you need a strategy. And a list of test ideas from a viral listicle or Twitter thread is not a strategy.

This is especially important for smaller brands that don’t yet have the scale to run multiple holdout tests per month. To make an impact, these brands may have to change almost everything about the asset they’re testing.

For example, they might test a brand-new ad hook or landing page concept. If the new version of the asset isn’t built around a clear hypothesis about how the customer thinks and acts, it isn’t going to work.

Testing isn’t a blunt instrument for improving channel performance. It’s a process for learning more about your customers. Speak to customers and their needs better, and sales go up.

A solid set of testing hypotheses comes from a deep understanding of the customer and the way he or she uses and perceives your product category. This understanding is the product of experience in the industry and customer research.

You should think of your A/B testing program like the game Battleship: a major customer insight is somewhere on the board, and each test is a guess that helps you narrow down its position. Eventually, you hit on something.

What happens when you do finally identify a winning test idea? If you don’t have a plan, you won’t realize any of the potential upside you just uncovered.

If there are a lot of cooks in the kitchen–agencies, web developers, creatives–the win may not be implemented at all. But even if the winning variation is published…how do you know it’s still working? Especially after a year or more?

The lift that you experience from most of your A/B testing wins decays over time. The composition of the audience viewing your site or your marketing changes, and the “win” becomes less effective for people who have already seen it.

This is why it can feel like you’re constantly testing but never really getting ahead. In a best-case scenario, you would re-test your biggest wins quarterly to check whether they are still working.

You could also run an evergreen holdout group of 5-10% of your audience to track the total impact of the win over time.
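
One common way to keep an evergreen holdout stable is to bucket visitors deterministically by hashing a persistent identifier, so the same person lands in the holdout every session. This is a minimal sketch, not how any particular testing tool does it. It assumes you have a stable user or customer ID, and the function name, the default 5% holdout, and the salt string are hypothetical.

```python
import hashlib


def in_evergreen_holdout(user_id: str, holdout_pct: float = 0.05,
                         salt: str = "evergreen-holdout-v1") -> bool:
    """Deterministically place roughly holdout_pct of users in a long-running holdout."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    # First 8 hex characters = 32 bits, scaled to a bucket in [0, 1)
    bucket = int(digest[:8], 16) / 0x1_0000_0000
    return bucket < holdout_pct


# The same ID always gets the same answer, so the holdout stays stable over months
print(in_evergreen_holdout("customer-1234"))
```

Changing the salt reshuffles everyone, which is handy when you retire one holdout and start another. Because holdout users keep seeing the pre-test experience, comparing their revenue per user against everyone else’s gives you a running read on whether the win is still paying off.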

A successful A/B testing program makes a measurable impact on your bottom line, but it also leads to a better understanding of your customers and their relationship with your brand and products. Here are 4 steps you can take to ensure your testing program is successful:

One of the biggest misconceptions in A/B testing, and in eCommerce generally, is that “make sales go up” is a strategic goal. Yes, growth is the outcome that most of us are shooting for. But you need to develop some guidelines around who you are targeting and how you’re willing to speak to them.

As much as I love Twitter, the trend of “building in public” is one of the biggest drivers of unfocused testing. A highly respected marketer will Tweet out a win, and then many followers are tempted to drop everything and try it for their own brand.

But the success of any given tactic depends on the product, audience and business model of the brand testing it. That’s why every testing program needs a set of criteria that will enable the team to evaluate the relevance of any given test idea.

I’m not saying that someone else’s win isn’t worth trying, but you need to weigh it against the rest of your own strategic priorities.

A framework for a good strategic goal: Make sales go up by targeting [specific audience] and [making a change to their current experience] to [impact a specific KPI].

For example:

Once you’ve landed on a focused strategic goal, your next step is determining which A/B tests to run and prioritizing them. At this stage, many marketers will be tempted to write out a list of hunches they have always wanted to test. But you’ll have a higher success rate if you perform some customer and/or user research and let that inform your testing agenda.

Some ways you can perform research:

When you define a hypothesis, you want to know if making a specific change to the audience’s experience will result in a specific change to the audience’s behavior.

Yes, the overall goal is improving sales, but there are multiple levers that can achieve that goal. For example, improving an ad’s clickthrough rate, reducing the cost per impression, or increasing conversion rate could all improve the ad’s efficiency.
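
A quick bit of arithmetic shows why these are separate levers. Spend per impression is CPM / 1,000, and each impression produces CTR × CVR purchases on average, so cost per acquisition works out to CPM / (1,000 × CTR × CVR). Here’s a small sketch with hypothetical numbers (a $20 CPM, 1% CTR, and 2.5% conversion rate); it assumes conversion rate is measured as purchases per click.

```python
def cost_per_acquisition(cpm: float, ctr: float, cvr: float) -> float:
    """CPA = (CPM / 1000) / (CTR * CVR), with CVR measured as purchases per click."""
    cost_per_impression = cpm / 1000
    purchases_per_impression = ctr * cvr
    return cost_per_impression / purchases_per_impression


baseline = cost_per_acquisition(cpm=20.0, ctr=0.010, cvr=0.025)
better_ctr = cost_per_acquisition(cpm=20.0, ctr=0.012, cvr=0.025)
cheaper_cpm = cost_per_acquisition(cpm=16.0, ctr=0.010, cvr=0.025)
print(f"{baseline:.2f} {better_ctr:.2f} {cheaper_cpm:.2f}")
```

Both changes lower CPA (from about $80 to about $67 and $64, respectively), but each one points to a different explanation of what changed in the audience’s behavior. That’s why the hypothesis needs to name the metric it expects to move.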

If you run an A/B test that was supposed to increase conversion rate, but it improved clickthrough rate instead, that’s only partially a win. You improved performance, but you don’t really know why or how. So that learning can’t be applied elsewhere, and it’s less likely to work again in the future.

You also need a test setup that truly measures incremental revenue: sales that would not have occurred in the absence of the change you’re testing. This takes some extra effort, but it’s the only way to know for sure whether your test is increasing sales.

Otherwise you may wind up with a list of “wins” and tepid sales performance. This will harm the credibility of your A/B testing program.
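
With a randomized holdout in place, the basic incrementality arithmetic is simple: scale the holdout’s revenue per user up to the size of the test group, then subtract that from what the test group actually generated. This is a minimal point-estimate sketch with a hypothetical function name and hypothetical numbers. It assumes both groups were drawn randomly from the same audience over the same dates, and it doesn’t tell you whether the difference is statistically significant.

```python
def incremental_revenue(test_revenue: float, test_users: int,
                        holdout_revenue: float, holdout_users: int) -> float:
    """Revenue the test group generated beyond what the holdout's baseline predicts."""
    # Holdout revenue per user approximates what test users would have spent without the change
    baseline_rpu = holdout_revenue / holdout_users
    expected_without_change = baseline_rpu * test_users
    return test_revenue - expected_without_change


# Hypothetical 90/10 split: 18,000 test users vs. a 2,000-user holdout
print(incremental_revenue(test_revenue=47_000, test_users=18_000,
                          holdout_revenue=5_000, holdout_users=2_000))
```

Here the holdout earns $2.50 per user, so the test group would be expected to bring in $45,000 without the change, and the $2,000 difference is the incremental revenue. Working in revenue per user rather than raw totals matters whenever the groups aren’t the same size, as with a small evergreen holdout.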

The breadth and ambition of your A/B testing program should match the support resources you can dedicate to the program. If tests prevent users from checking out, or tests in different channels run concurrently, or the results aren’t recorded accurately, all your effort will amount to nothing.

Getting it right takes time. If your testing program is a one-man or one-woman show, ramp up the volume of tests slowly and bring in an extra set of eyes to QA your setup before you launch.

If you’re fortunate enough to have a multi-person team at your disposal, invest in a project manager to ensure that tests don’t conflict with one another and the results are shared out in a standardized format.
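
Even a simple shared test calendar can catch collisions before launch. Here’s a hypothetical sketch of the kind of check a project manager might run: it flags any two tests whose date windows overlap and whose target audiences share at least one segment, regardless of channel. The class, field names, and segment labels are illustrative assumptions, not a feature of any specific tool.

```python
from dataclasses import dataclass
from datetime import date
from itertools import combinations


@dataclass(frozen=True)
class ScheduledTest:
    name: str
    channel: str
    audiences: frozenset[str]  # audience segments exposed to the test
    start: date
    end: date  # inclusive


def find_conflicts(tests: list[ScheduledTest]) -> list[tuple[str, str]]:
    """Return name pairs of tests that run at the same time on overlapping audiences."""
    conflicts = []
    for a, b in combinations(tests, 2):
        dates_overlap = a.start <= b.end and b.start <= a.end
        shared_audience = bool(a.audiences & b.audiences)
        if dates_overlap and shared_audience:
            conflicts.append((a.name, b.name))
    return conflicts


calendar = [
    ScheduledTest("pdp-hero-copy", "site", frozenset({"new visitors"}),
                  date(2024, 3, 1), date(2024, 3, 14)),
    ScheduledTest("prospecting-ad-hook", "paid social", frozenset({"new visitors"}),
                  date(2024, 3, 10), date(2024, 3, 24)),
    ScheduledTest("winback-subject-line", "email", frozenset({"lapsed customers"}),
                  date(2024, 3, 1), date(2024, 3, 31)),
]
print(find_conflicts(calendar))  # [('pdp-hero-copy', 'prospecting-ad-hook')]
```

The site test and the paid social test get flagged even though they run in different channels, because the same new visitors could see both and you couldn’t tell which change drove the result.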

Most of what I’ve written here is antithetical to “best practices” that have developed around Facebook account management for direct response marketers. There are two reasons for that.

The first is that Facebook is a complex ecosystem where it’s difficult to create a controlled A/B test environment. You are contending with creative, targeting, the makeup of Facebook’s active user base on a given day, and the level of competition in the ads marketplace. Those are a lot of variables to control for compared to a more straightforward channel like email.

Running “the perfect test” on Facebook is possible, but it requires more time and budget than many brands can afford. And the insights won’t come fast enough to maintain campaign profitability in a rapidly shifting environment. So I’m not telling you to stop testing your Facebook ads just because you can’t do it perfectly every time. Perfection isn’t realistic.

The second reason is that rapid iteration and A/B testing on Facebook lead to precisely the outcomes I’m warning against: you don’t get a true picture of “what works,” and your ad account performance is inconsistent. In many cases it feels like you’re always one step behind, and what worked amazingly well yesterday completely tanks today.

Some of those outcomes may be unavoidable because Facebook as a platform is so volatile. But it’s worthwhile to pursue a mix of structured testing, as outlined here, and more rapid iteration.

You can use learnings from A/B tests in other channels to shape the testing agenda for your Facebook ads, and run more structured tests on your Facebook landing pages to get a more concrete understanding of what your audience wants. You may never feel like you have a complete handle on “what works” for your brand on Facebook, but these steps will make your testing feel less like a shot in the dark.

Want to run more efficient A/B tests? Give Triple Whale a try. We give Shopify brands the ability to run meaningful, actionable creative tests at scale.

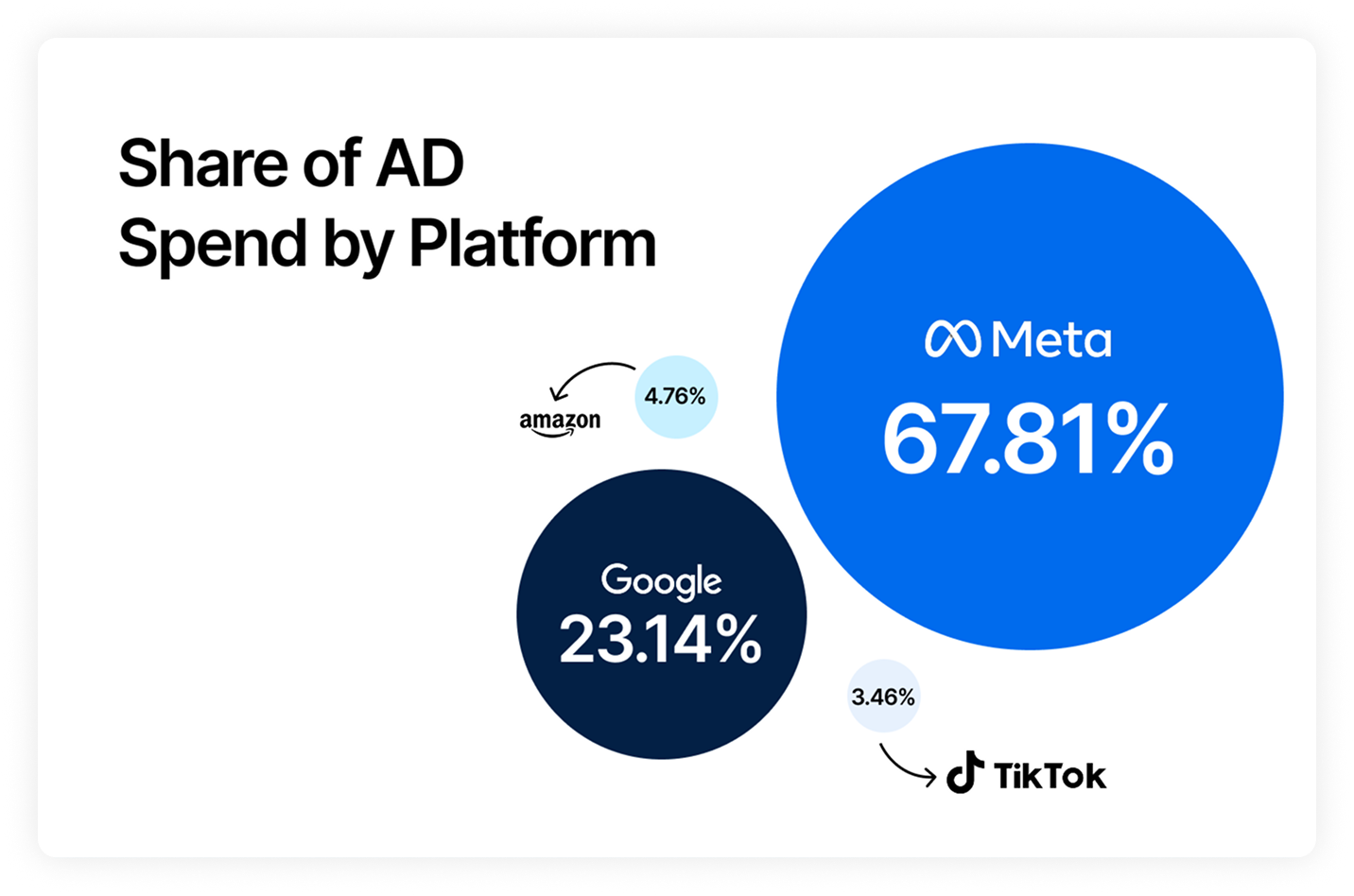

Body Copy: The following benchmarks compare advertising metrics from April 1-17 to the previous period. Considering President Trump first unveiled his tariffs on April 2, the timing corresponds with potential changes in advertising behavior among ecommerce brands (though it isn’t necessarily correlated).